Filebeat nginx access log

3/14/2023

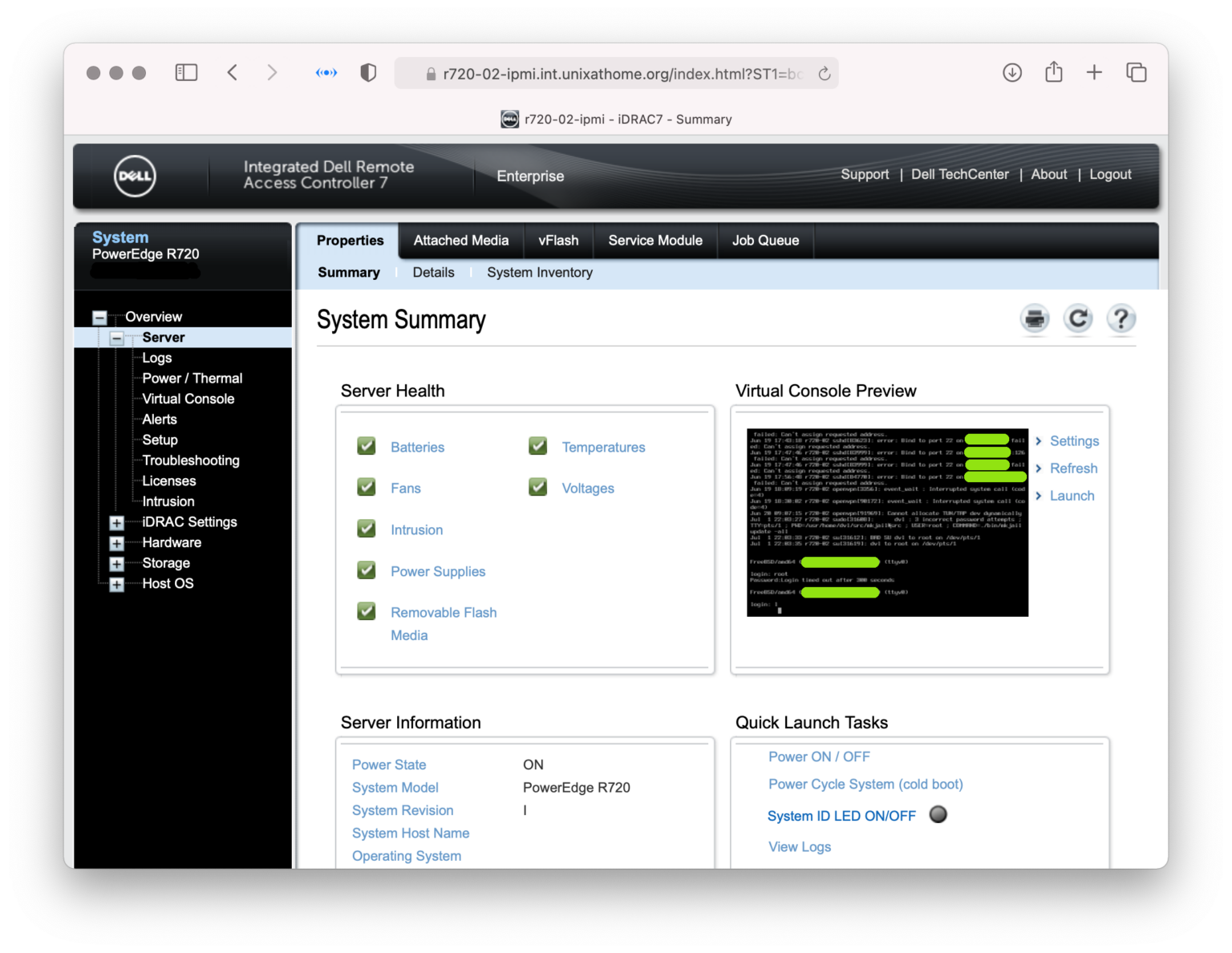

If you use the default log location, it would be segmentedĭefault load path error]# /etc/init.d/filebeat status If you customize the log directory, it would not be segmented. The default filters Filebeat hat put there gave me zero hits because the original fields didn't exist in my Elasticsearch index.įor the complete setup, as always TheAwesomeGarage has you covered on GitHub.Built a set of elk by myself 7.x Version environment found that using filebeat to collect nginx or apapche could not customize the directory log directory. For example, on the SSH dashboard, In order to visualize successful and failed authentication attempts, I had to change the filters to event.outcome:success and event.outcome:failure on the respective dashboards. I had to make minor adjustments to the queries made by some of the default dashboards for them to give me results. After that, your logs should start ticking in. What is really important when you start your stack the first time is to run the Filebeat setup then restart Kibana once. If you want other types of logs, like slowlogs, it seems mounting is the way to do it. Normally, you'd use docker logs, but from docker logs you get stdout and stderr. I mount the log folder of a mariadb instance into Filebeat because that was the easiest way I found to make Filbeat fetch the logs from an external docker container.I point Filebeat to my Kibana installation, in order for Filebeat to set up great default dashboards and point Kibana to the Elasticsearch server.This was IMPORTANT for me in order to avoid problems indexing later on after I alter modules. 
I say that for each setup I run from Filebeat, I overwrite templates in Elasticsearch.I set up autodiscover and override the default file location for the mariadb slowlogs for my nextcloud-db container.In /usr/share/filebeat/filebeat.yml I do several things.I override /usr/share/filebeat/module/system/syslog/manifest.yml and /usr/share/filebeat/module/system/auth/manifest.yml because Filebeat doesn't seem to get syslog and auth right when the host is Ubuntu 18.04.Filebeat contains a few variants, but I needed to add this one. I override /usr/share/filebeat/module/apache/access/ingest/default.json because an image I run has altered the default Apache access log format.Filebeat contains a few variants, but I needed to add my own. I override /usr/share/filebeat/module/nginx/access/ingest/default.json because I've altered the default Nginx access log format.But Filbeat needs quite som attention in order to ship logs that Elasticsearch can ingest in a structured manner. Elasticserach and Kibana are both "fire and forget" in the docker-compose setup context. The really interesting part here is Filebeat. Note how I customize the configs It's by bind-mounting config files to replace the default/shipped versions. Elasticsearch is set up in a single node cluster - because that's all I need.Note that I've also set up Basic Auth on the Kibana vhost (server block) in order to protect my logs. You can see how I set up Kibana to be available to the Internet via my Nginx setup.There are quite a few takeaways here, compared to what you see in traditional tutorials with only the standard examples. nextcloud-db-logs:/mnt/nextcloud-db-log:ro var/lib/docker/containers/:/var/lib/docker/containers/:ro var/run/docker.sock:/var/run/docker.sock LETSENCRYPT_EMAIL=$/filebeat/filebeat.yml:/usr/share/filebeat/filebeat.yml:ro See an extract from my docker-compose.yml: kibana: So - how did I set it up? What caveats have I encountered? But it'd probably be to learn, not because I need it. 
I guess at some time, when I'd like to dive into Logstash and learn about its potential, I might install it. Sure, professional users will benefit from Logstash, but you pay a price that I'm not (yet) willing to pay. What are the latest errors, filtered per server or domain?.

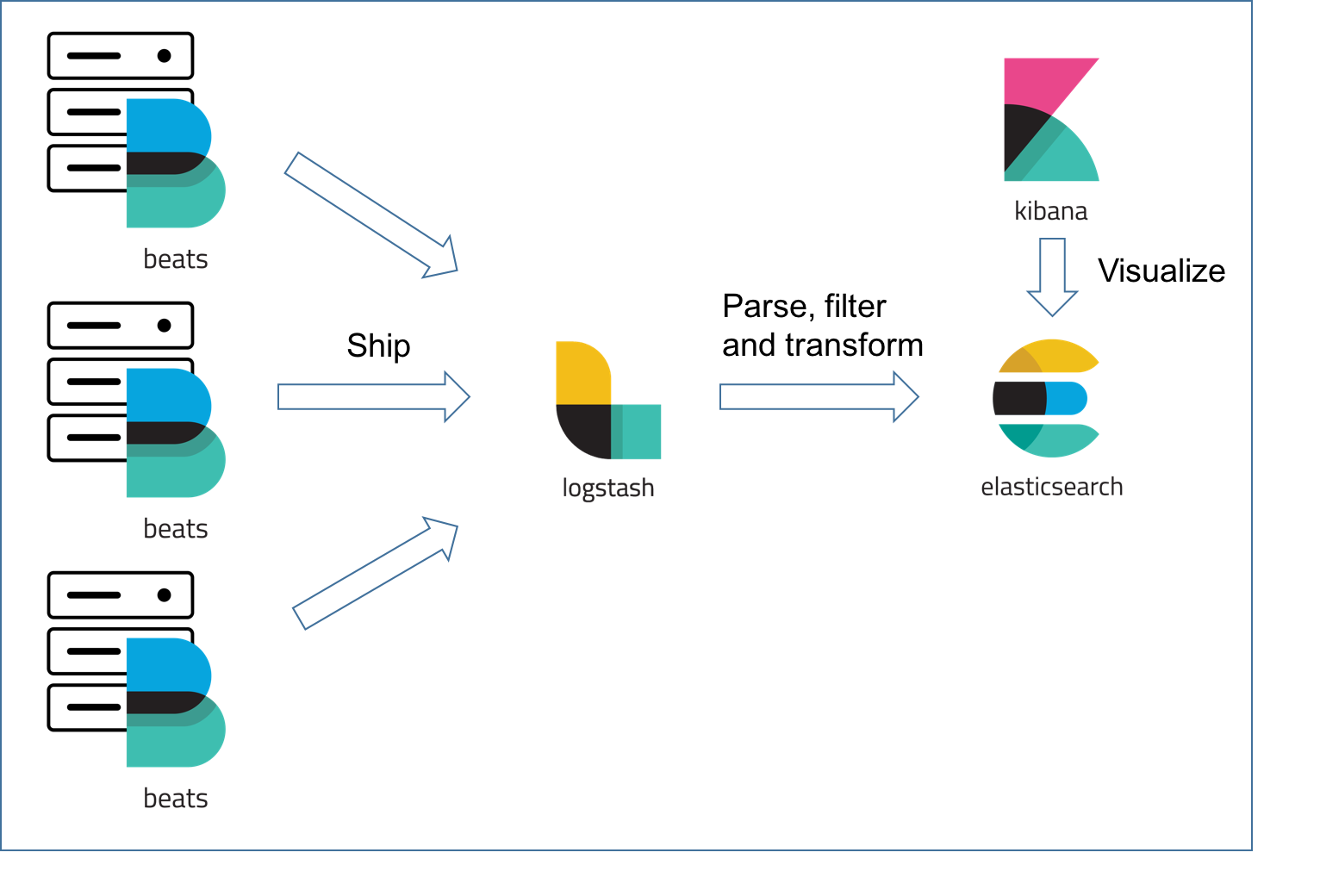

Most popular URLs, and aggregations per server, domain, etc.From where do people visit my websites based on both Apache and Nginx? Beautifully visualized on a map.From where do people try to log on my SSH, and what usernames are most frequent?.In my setup, I'm using Filebeat to ship logs directly to Elasticsearch, and I'm happy with that. I won't mention Metricbeat for now, because metrics and monitoring are covered with Prometheus and Grafana (for now). Logstash will hog lots of resources! For simple use cases, you'll probably manage perfectly well without Logstash, as long as you have Filebeat. However, if you have limited computational resources and few servers, it's probably overkill. When it comes to centralized logging, the ELK stack (Elasticsearch, Logstash and Kibana) often pops up.
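To make the filebeat.yml choices described in this post a bit more concrete, here is a minimal sketch of what such a file might look like. It is not the author's actual config: the hostnames (kibana:5601, elasticsearch:9200), the use of the mysql module for the mariadb slowlog, and the slowlog file pattern are all assumptions made for illustration. Only the container name nextcloud-db and the mount point /mnt/nextcloud-db-log come from the text.

```yaml
# Hypothetical filebeat.yml sketch; adjust names and paths to your own stack.

filebeat.autodiscover:
  providers:
    - type: docker
      templates:
        # Apply this config only to the database container (name from the post).
        - condition:
            contains:
              docker.container.name: nextcloud-db
          config:
            # Assumption: the mysql module is used to parse mariadb slowlogs.
            - module: mysql
              error:
                enabled: false
              slowlog:
                enabled: true
                # Assumption: slowlog file name pattern inside the mounted folder.
                var.paths: ["/mnt/nextcloud-db-log/*slow*.log"]

# Overwrite the index template in Elasticsearch on every setup run,
# so template changes are picked up after modules are altered.
setup.template.overwrite: true

# Where Filebeat loads its default dashboards (assumed hostname).
setup.kibana:
  host: "kibana:5601"

# Ship logs directly to Elasticsearch, without Logstash (assumed hostname).
output.elasticsearch:
  hosts: ["elasticsearch:9200"]
```

With a file like this bind-mounted over /usr/share/filebeat/filebeat.yml, the one-time initialization mentioned in the post is typically run as filebeat setup inside the Filebeat container, followed by a Kibana restart.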
